Deepseek is already giving tough competition to OpenAI’s ChatGPT in the domain of Artificial Intelligence. The Chinese-backed reasoning model became an overnight sensation after it was launched.

Everyone is excited about what the new AI model has to offer and whether it is better than ChatGPT. People around the world have already started using Deepseek on their devices.

However, privacy concerns are being raised by tech enthusiasts. The major question is, what happens to the data that users share with the AI model?

Is it being accessed or monitored by third-party companies, government agencies, and the like?

Many people assume that US-based OpenAI does a reasonably good job of protecting the user data shared with its AI model.

They worry that a similar degree of data privacy may not be available with a Chinese AI model like Deepseek R1.

Hence, to keep the data you share with the AI chatbot secure, you can run the AI tool locally on your PC.

Advantages of Running Deepseek Locally

Running an AI model like Deepseek locally comes with several advantages.

Because you are the only user of the chatbot, responses are not slowed down by other people's traffic.

There is no “server is busy” issue delaying the response time of the AI model. Once the model is downloaded, the network dependency is next to none.

If you keep encountering the server busy error on Deepseek, fix it using our troubleshooting guide.

Any data shared with the AI remains stored on the device locally and is not shared with any external server. So, this ensures a great deal of data privacy.

Another great benefit of using Deepseek locally is the freedom to select the version of the AI model as per the hardware of your PC.

There is no need to shell out loads of money on hosting a powerful version of the AI model in the cloud.

System Requirements to Locally Run Different Versions of Deepseek

Deepseek R1 comes in several versions, each with different system requirements for running it on your PC.

| Deepseek Model | Parameters | Storage | Memory (Recommended) | GPU (Recommended) |
| --- | --- | --- | --- | --- |
| Deepseek-R1-Distill-Qwen | 1.5B | 1.1 GB | 3.5 GB | NVIDIA RTX 3060 (12 GB) or higher |
| Deepseek-R1-Distill-Qwen | 7B | 4.7 GB | 16 GB | NVIDIA RTX 4080 (16 GB) or higher |
| Deepseek-R1-Distill-Qwen | 14B | 9 GB | 32 GB | Multi-GPU |
| Deepseek-R1-Distill-Qwen | 32B | 20 GB | 74 GB | Multi-GPU |
| Deepseek-R1-Distill-Llama | 8B | 4.9 GB | 18 GB | Multi-GPU |
| Deepseek-R1-Distill-Llama | 70B | 43 GB | 161 GB | Multi-GPU |
| Deepseek R1 | 671B | 404 GB | 1.3 TB | Multi-GPU |

NOTE: A Multi-GPU setup means using 4 or more GPUs together to handle the processing load of the AI model.

As you may have noticed, RAM and GPU are very important in facilitating the efficient performance of the AI model.

Whichever version of the AI you choose, you need enough memory, storage space, and a capable graphics unit to run it.

If you want to run Deepseek locally without intensive computing resources, I suggest using the Deepseek-R1-Distill-Qwen-1.5B model.

It is the one in the table with 1.5B parameters and the lowest hardware requirements.
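To make the table easier to apply, here is a small sketch that maps the RAM on your PC to a model tag. The name `pick_model` is a made-up helper, not part of Ollama, and the thresholds are simply the "Memory (Recommended)" column rounded to whole GB (3.5 GB becomes 4, 1.3 TB is treated as 1300). The tags assume the `deepseek-r1:<size>` naming that the Ollama commands in this guide use.

```shell
# pick_model RAM_GB
# Hypothetical helper: prints the largest model tag whose recommended
# memory (from the table above) fits into the given amount of RAM.
pick_model() {
  if   [ "$1" -ge 1300 ]; then echo "deepseek-r1:671b"
  elif [ "$1" -ge 161 ];  then echo "deepseek-r1:70b"
  elif [ "$1" -ge 74 ];   then echo "deepseek-r1:32b"
  elif [ "$1" -ge 32 ];   then echo "deepseek-r1:14b"
  elif [ "$1" -ge 18 ];   then echo "deepseek-r1:8b"
  elif [ "$1" -ge 16 ];   then echo "deepseek-r1:7b"
  elif [ "$1" -ge 4 ];    then echo "deepseek-r1:1.5b"
  else
    # Below the 3.5 GB recommended for the smallest model
    echo "not enough RAM for any model in the table" >&2
    return 1
  fi
}
```

For example, `pick_model 16` prints `deepseek-r1:7b`. The sketch looks only at RAM, so check the GPU column separately before settling on a version.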

How to Install Deepseek on Windows 11 Using Ollama?

Let us set up Deepseek on your PC using Ollama and Chatbox. The steps described in this guide apply to Windows, Mac, and Linux operating systems.

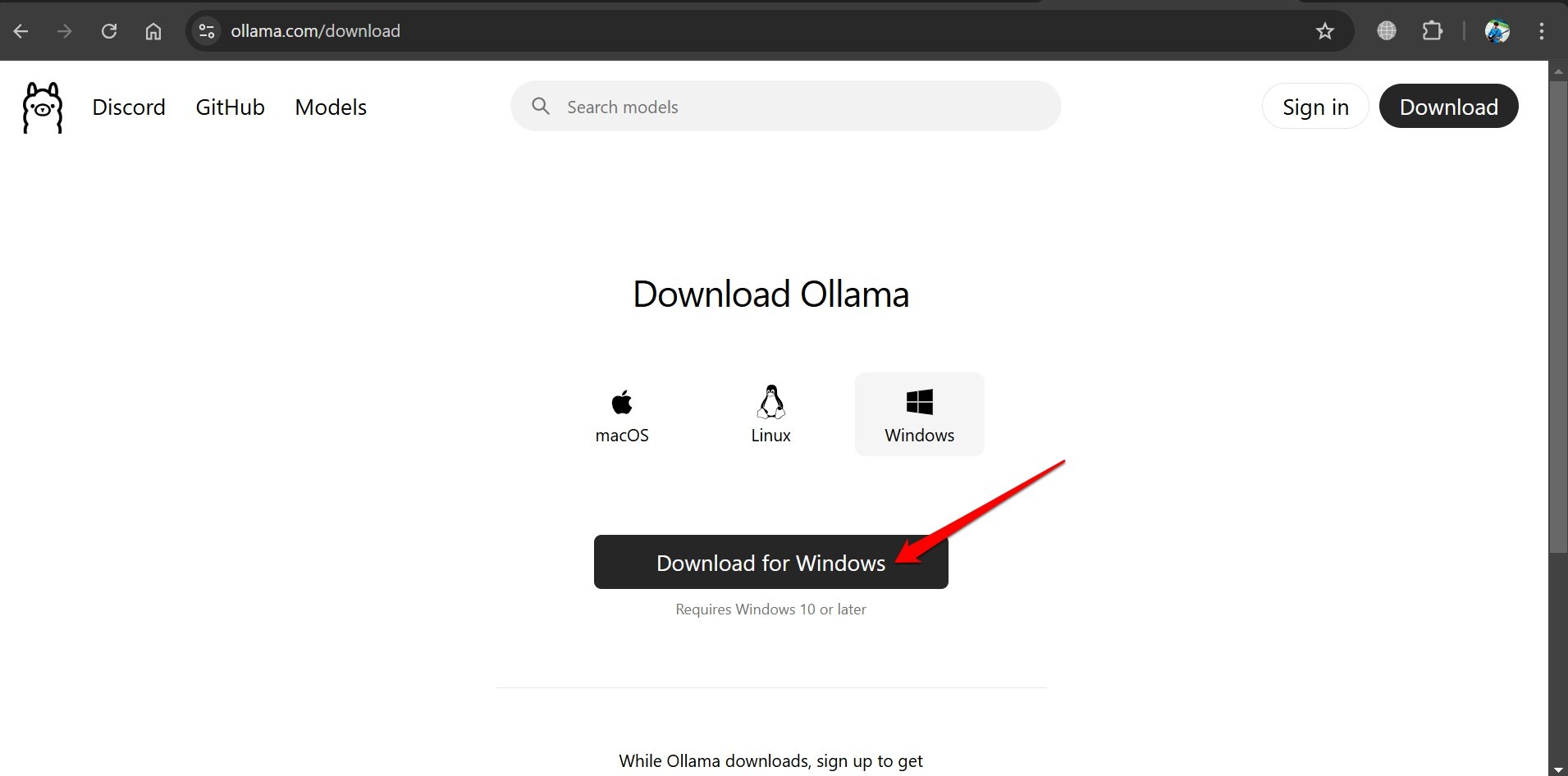

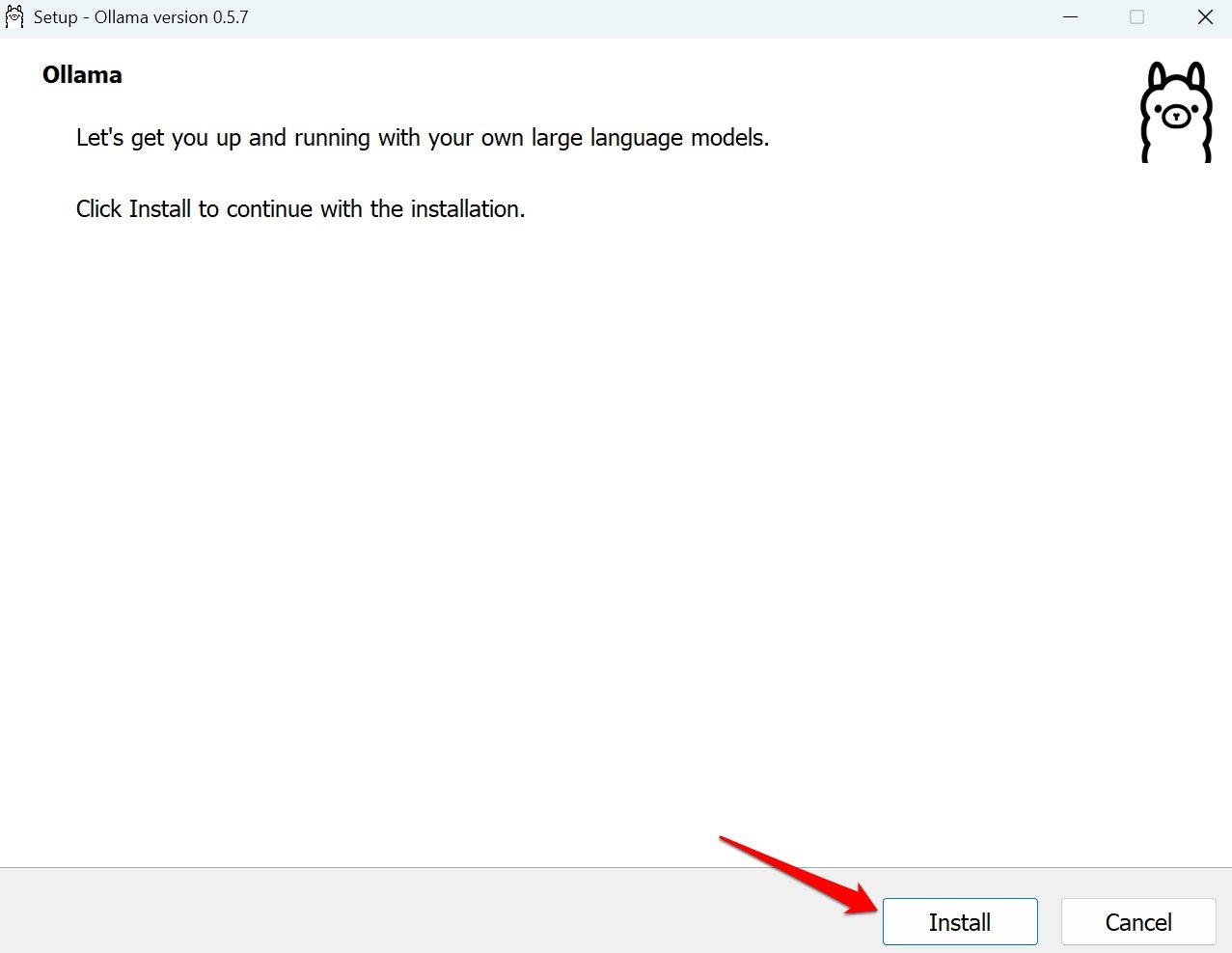

- Go to the Ollama official website.

- Download the Installer for your operating system.

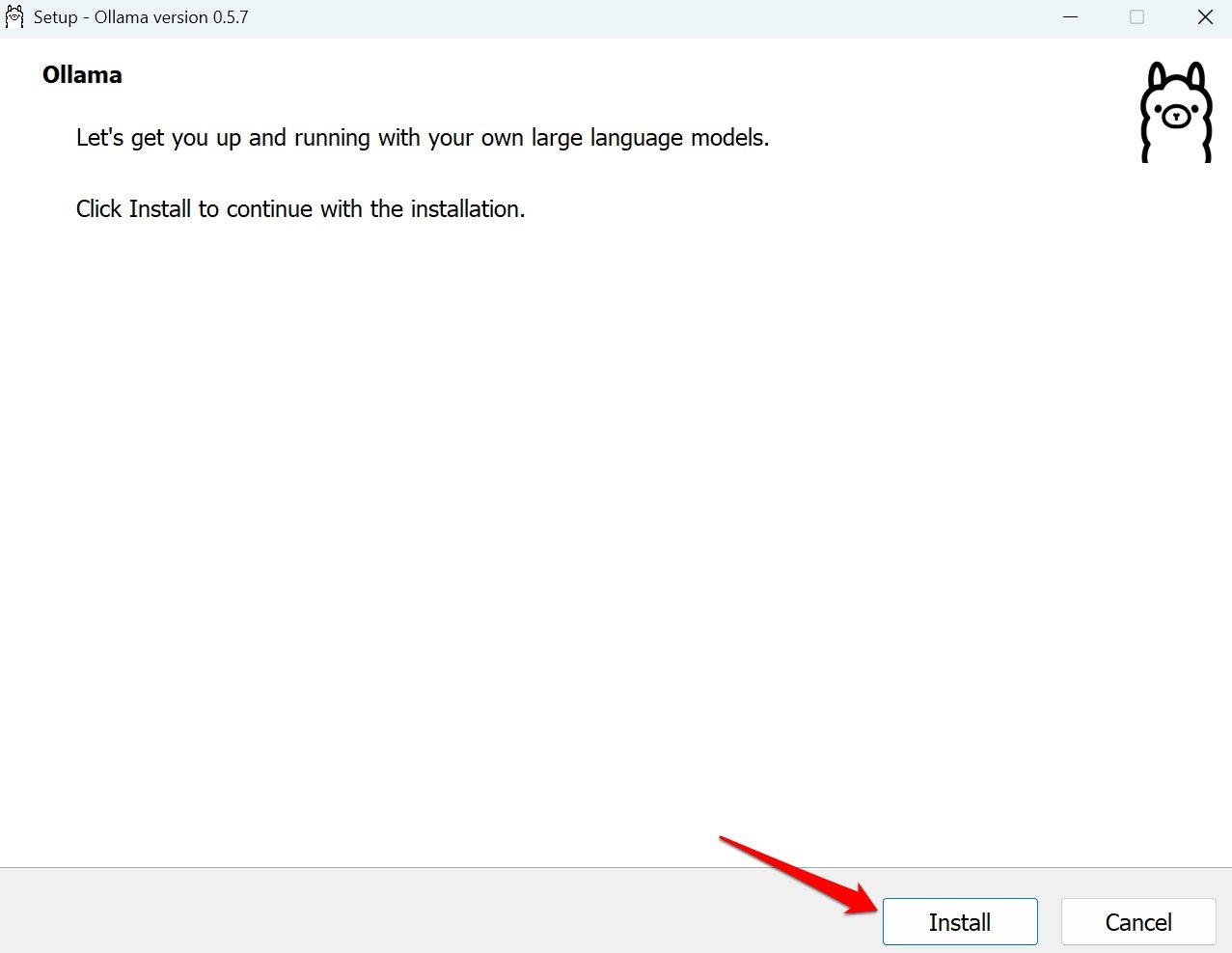

- Run the installer.

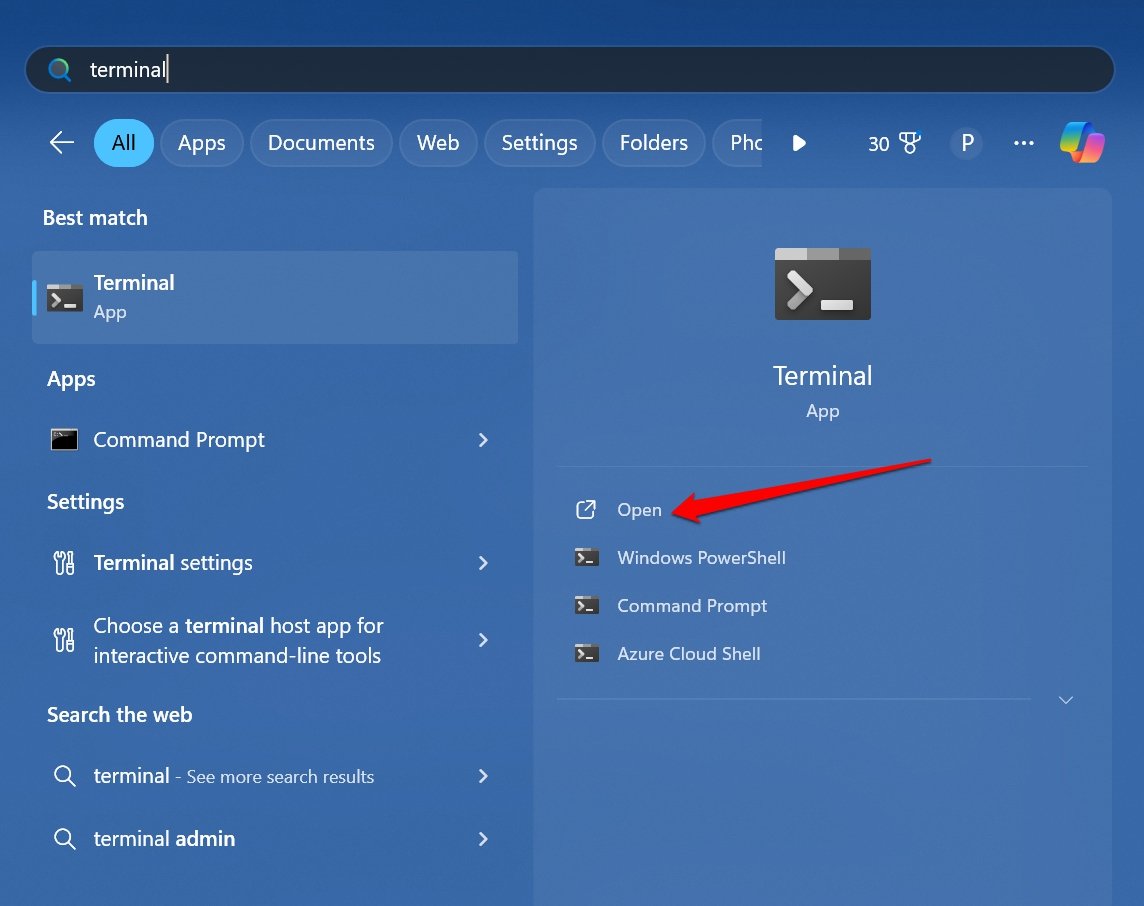

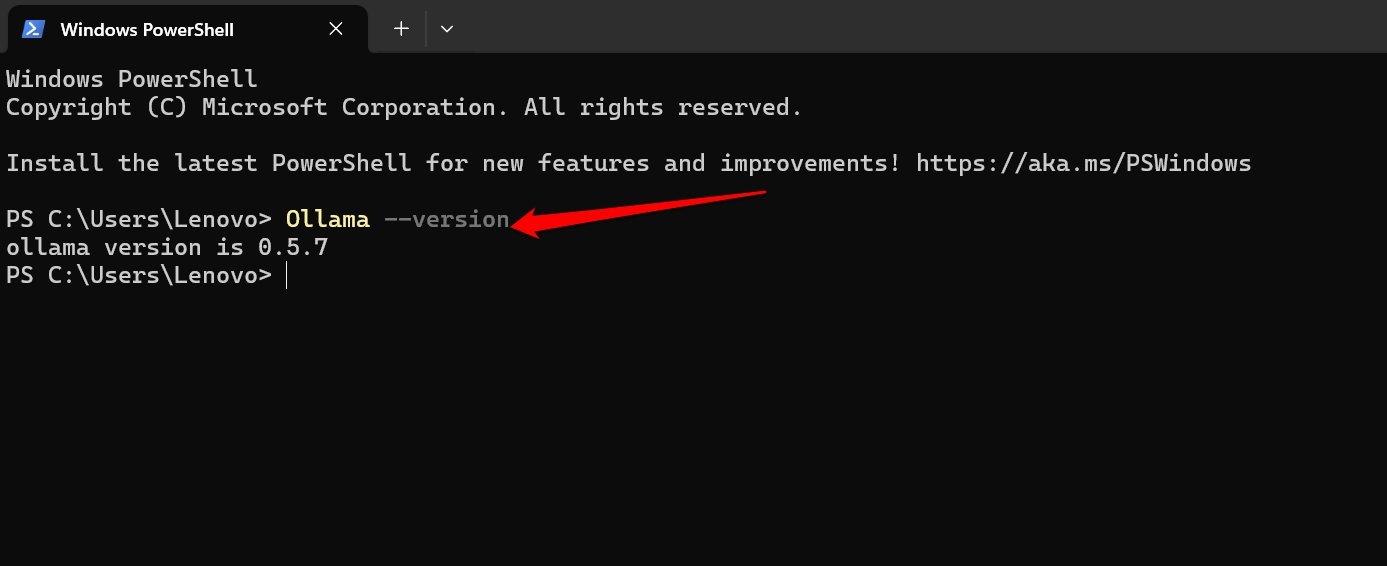

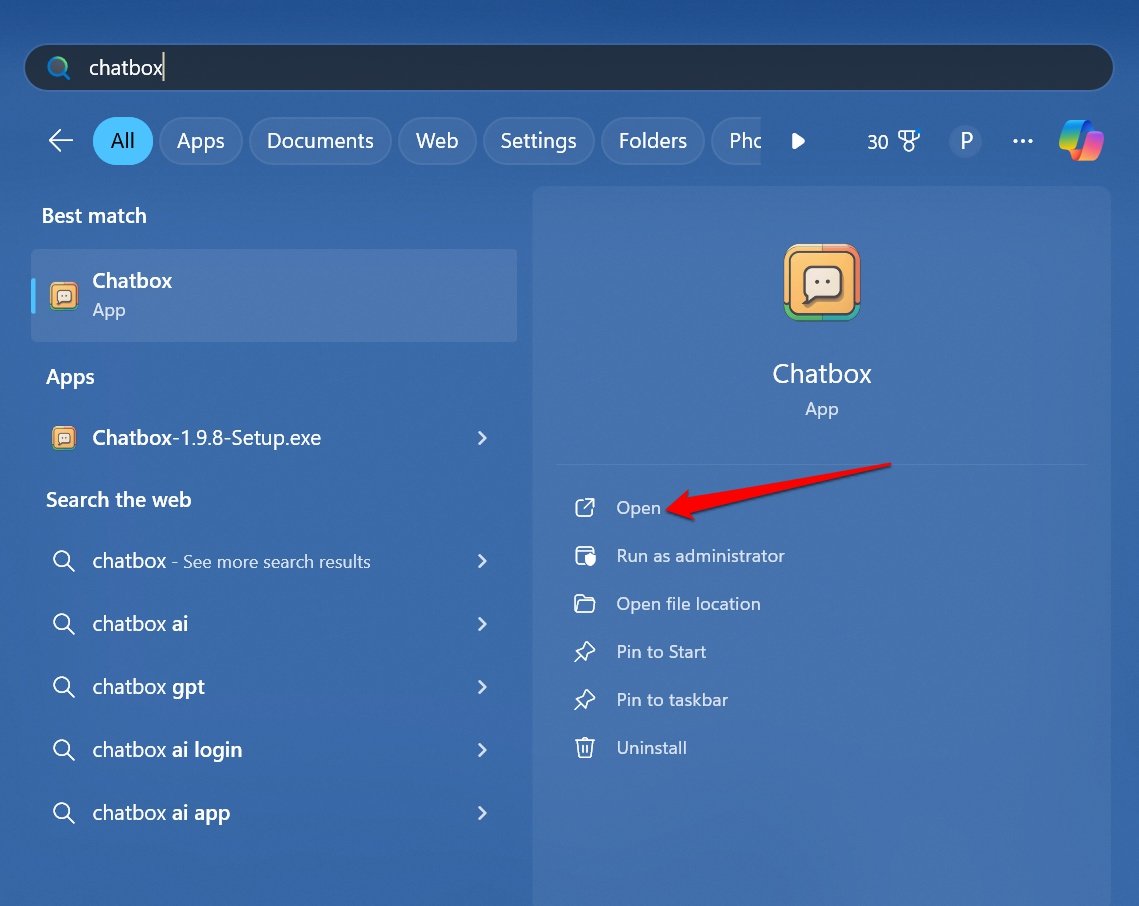

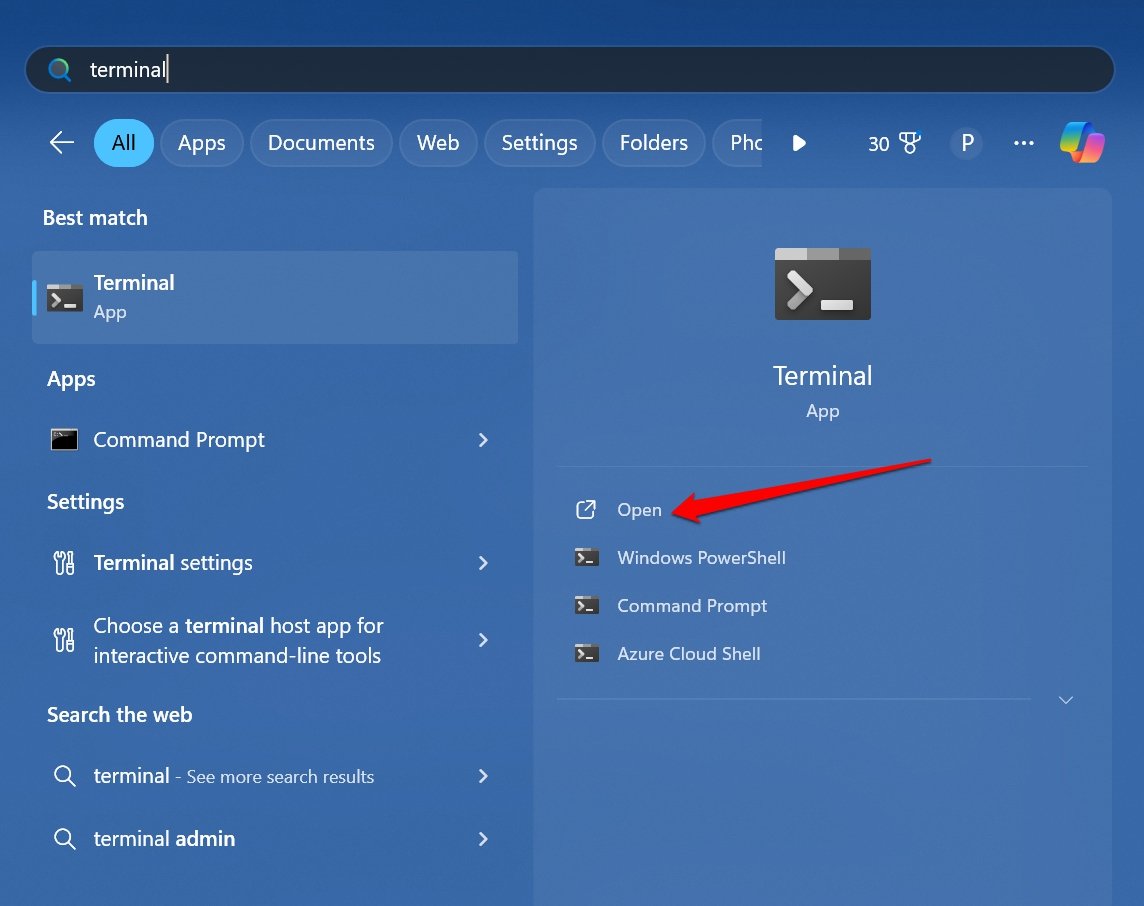

- Press Windows + S to launch the search. Type Terminal and click Open.

- In the Terminal screen, type the following.

ollama --version

- Press Enter.

The terminal should return the Ollama version number. This means Ollama is ready to use.

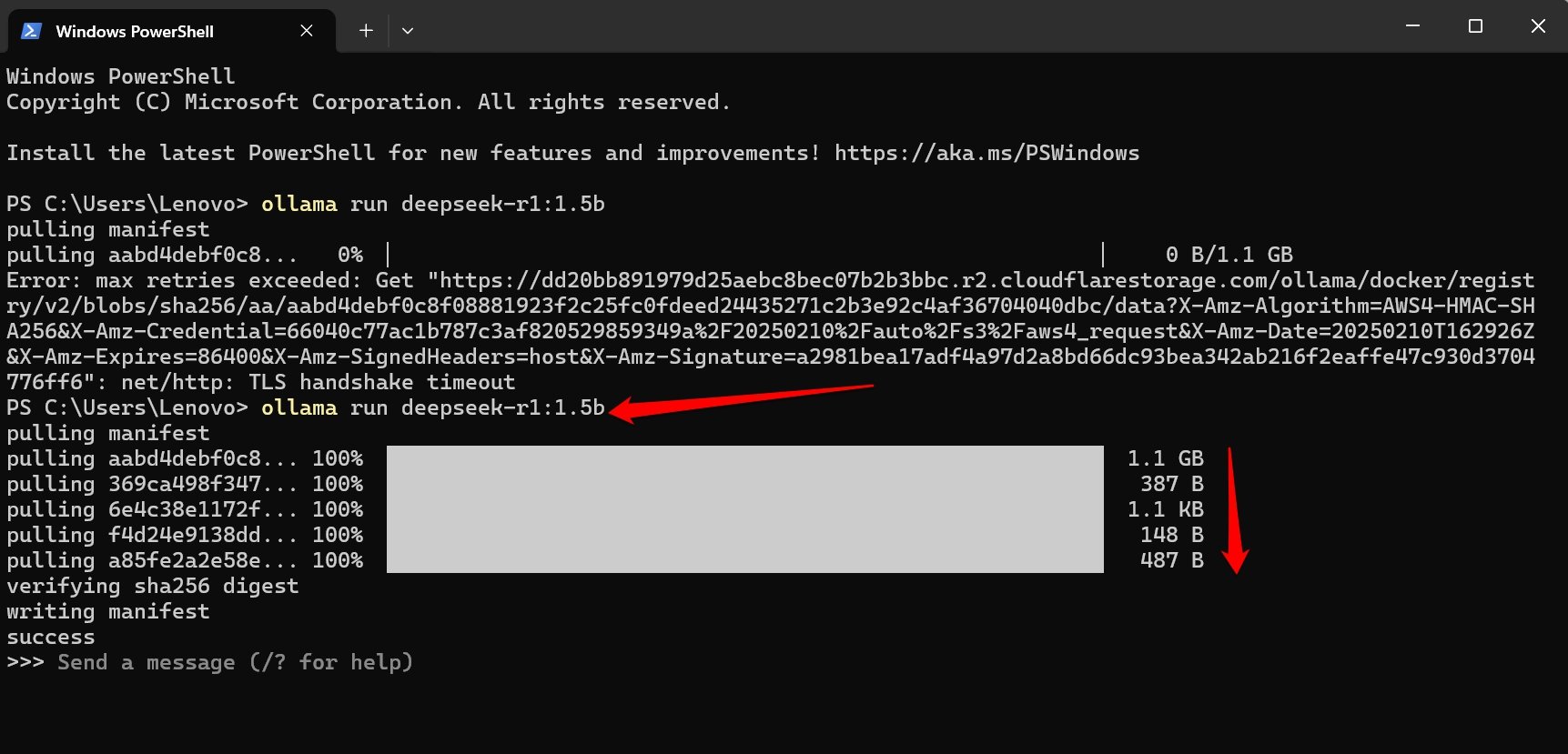

Next, you have to download the Deepseek model of your choice. For example, I will show you how to download Deepseek-R1-Distill-Qwen with 1.5B parameters.

- Access the Terminal and write this command.

ollama run deepseek-r1:[AI model parameter]

Replace the AI model parameter in the above command with the actual value.

For instance, type ollama run deepseek-r1:1.5b if you intend to use the 1.5B parameter version of the AI model.

The AI model will now start to download. The progress will be visible on the Terminal screen.

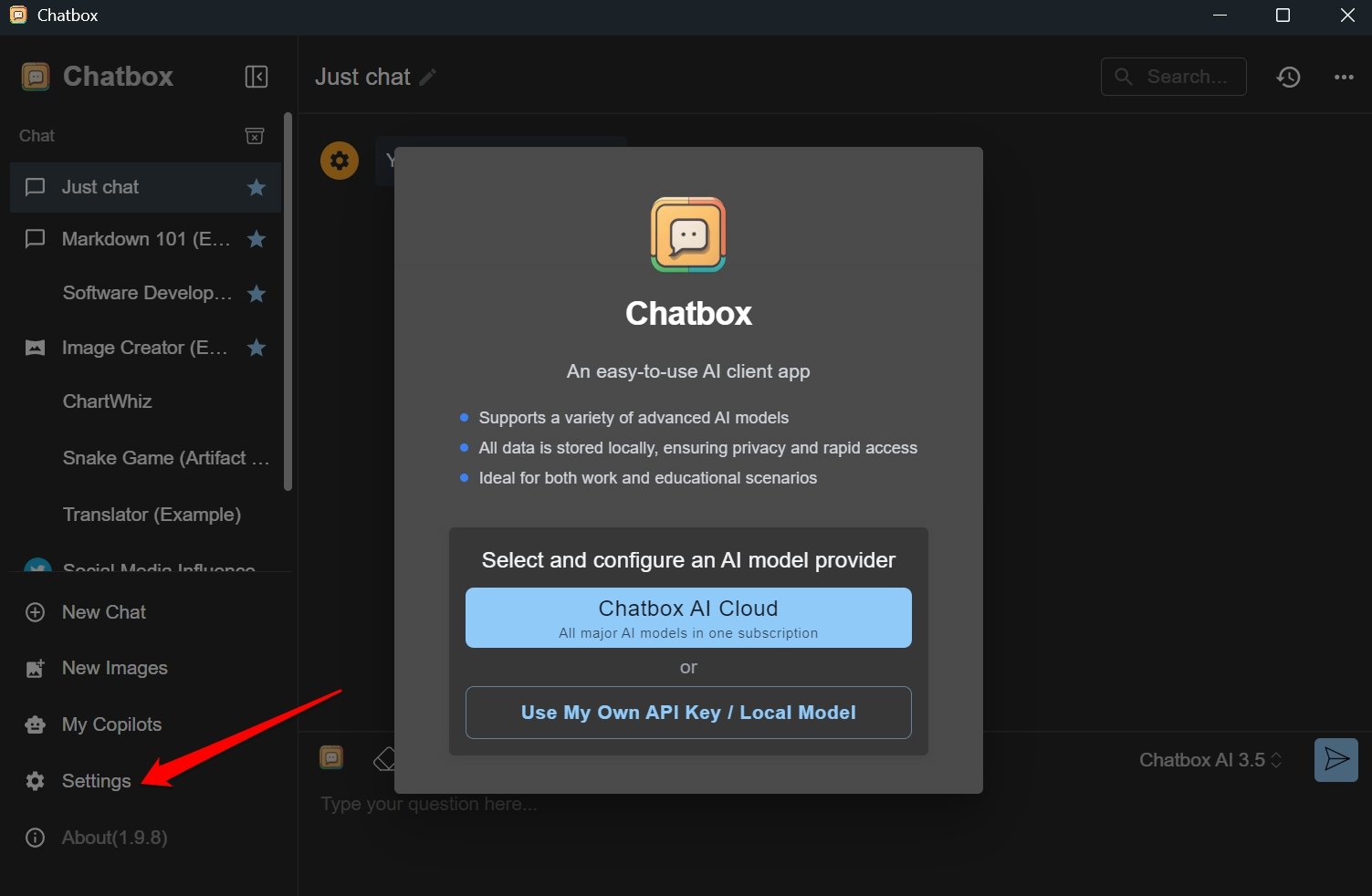
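If you would rather download the model without opening a chat right away, the sketch below checks whether a tag is already on disk and pulls it only when it is missing. `ensure_model` is a made-up helper name; `ollama list` and `ollama pull` are regular Ollama subcommands. The check assumes the first column of `ollama list` holds the model name.

```shell
# ensure_model TAG
# Hypothetical helper: downloads TAG only if it is not installed yet.
ensure_model() {
  if ! command -v ollama >/dev/null 2>&1; then
    echo "ollama not found on PATH" >&2
    return 127
  fi
  # Skip the header row of `ollama list`, then compare the NAME column
  # against the requested tag as an exact, literal match.
  if ollama list | awk 'NR > 1 { print $1 }' | grep -qxF "$1"; then
    echo "$1 is already downloaded"
  else
    ollama pull "$1"
  fi
}
```

Call it as `ensure_model deepseek-r1:1.5b`. You can still start a chat later with the run command from the steps above.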

After installing Deepseek, proceed to install the chat interface. Let us use Chatbox.

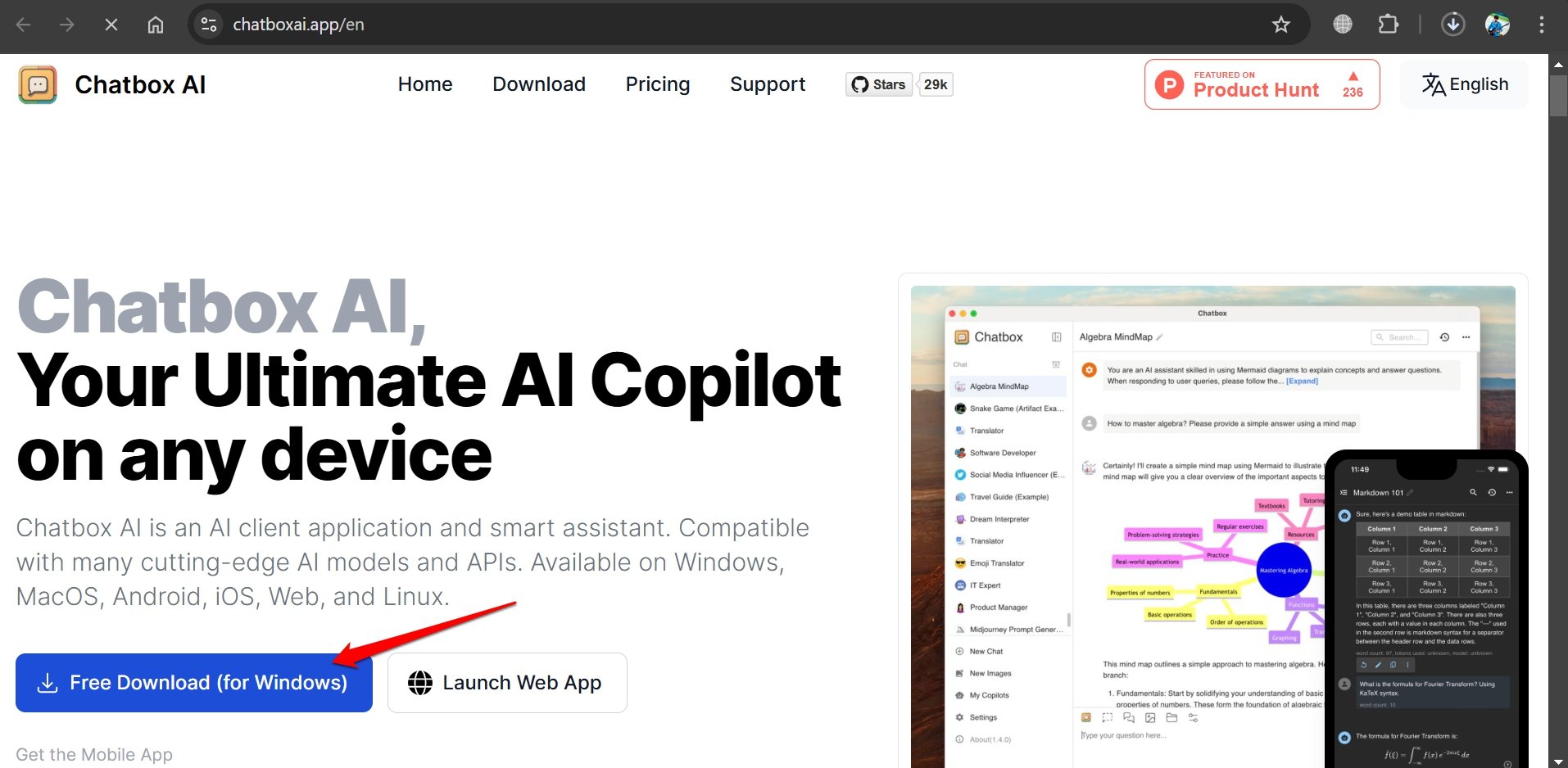

- Go to the Chatbox official website to download the app.

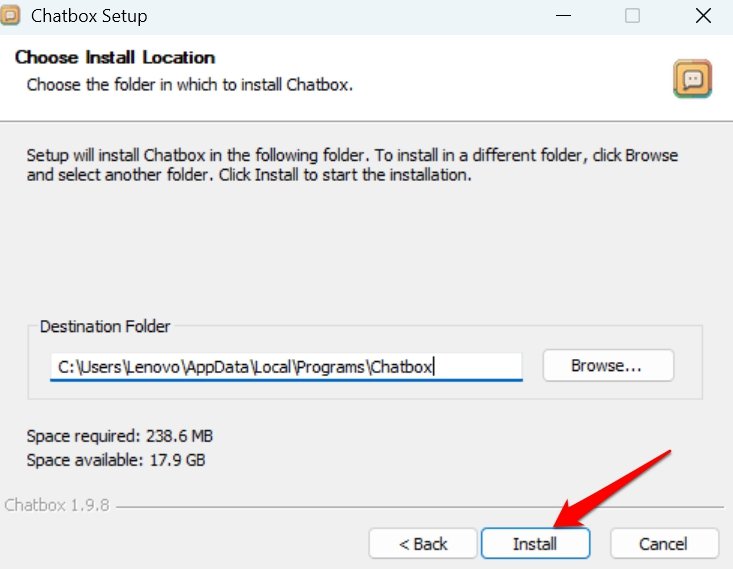

- Install Chatbox on your PC.

- Launch Chatbox.

- On the left panel, click Settings.

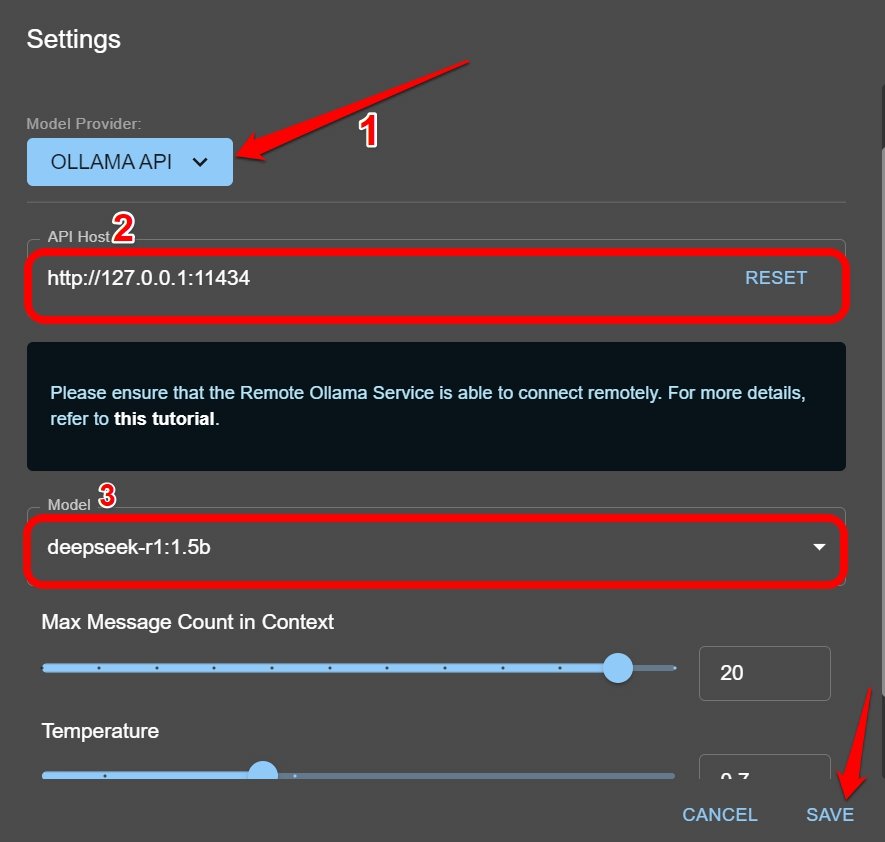

- Set the model provider as Ollama API.

- Change the API host to http://127.0.0.1:11434.

- Select the Deepseek model. [the same as the model you downloaded, Deepseek-R1-Distill-Qwen with 1.5B parameters in this example]

- Press Save to confirm the changes.

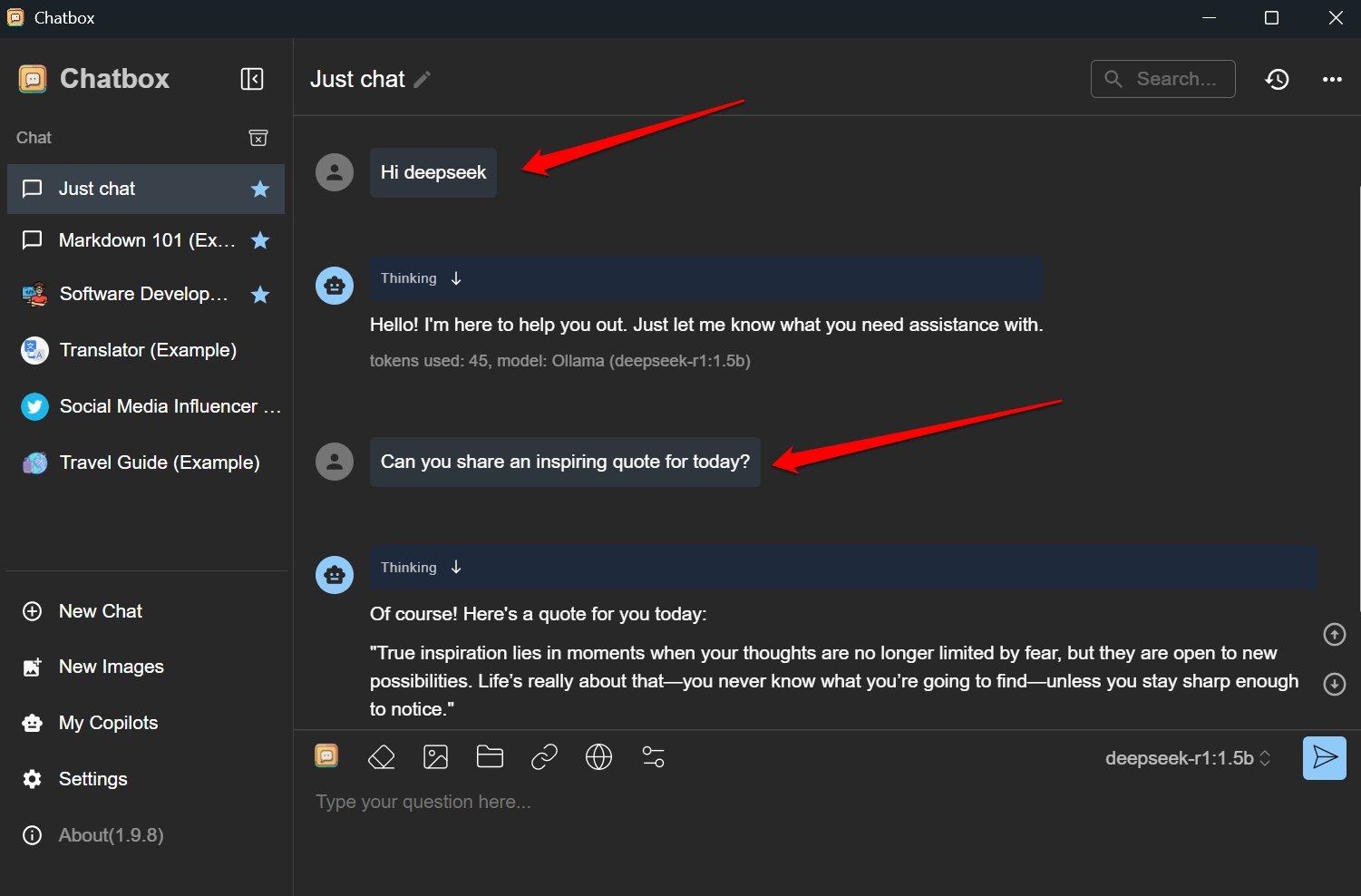

Now, you can start asking the AI model questions. It will answer them instantly. Enjoy.
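Chatbox talks to Ollama through the API host you entered in the settings, and you can try the same endpoint from the Terminal. This is a minimal sketch, assuming Ollama is running on the default host from the steps above. `build_payload` and `ask_model` are made-up helper names. `/api/generate` is the Ollama endpoint for a single prompt, and `"stream": false` asks for one complete JSON reply instead of a stream.

```shell
API_HOST="http://127.0.0.1:11434"   # same API host as in the Chatbox settings

# build_payload MODEL PROMPT
# Hypothetical helper: prints the JSON body for POST /api/generate.
# Only backslashes and double quotes are escaped, so keep the prompt
# on a single line.
build_payload() {
  escaped=$(printf '%s' "$2" | sed 's/\\/\\\\/g; s/"/\\"/g')
  printf '{"model":"%s","prompt":"%s","stream":false}' "$1" "$escaped"
}

# ask_model MODEL PROMPT
# Hypothetical helper: sends the prompt and prints the raw JSON reply.
ask_model() {
  curl -s "$API_HOST/api/generate" -d "$(build_payload "$1" "$2")"
}
```

For example, `ask_model deepseek-r1:1.5b "Why is the sky blue?"` returns a JSON object whose `response` field holds the answer. If Chatbox does not show your model, `curl -s http://127.0.0.1:11434/api/tags` lists the models Ollama has available locally.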

Install Deepseek Locally Using Ollama with Docker

Here is another way to install Deepseek locally, using Ollama together with Open WebUI running in a Docker container.

- Download and install Ollama on your PC. [refer to steps in the previous method]

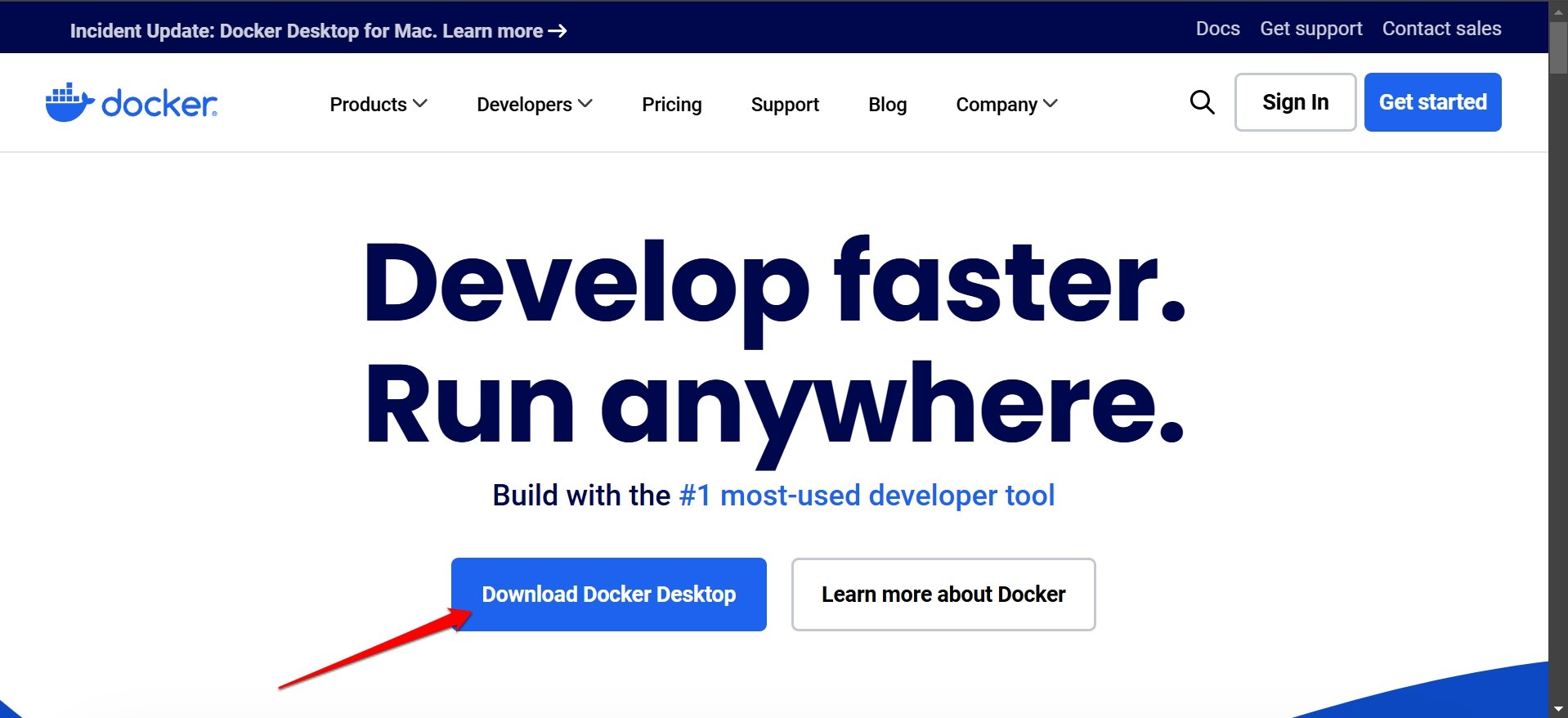

- Get Docker Desktop for your operating system.

- Install and run Docker on your PC.

- Launch the Terminal app using Windows + S (Windows Search).

- To check if Docker is ready to be used, type the following,

docker --version

- Now, to fetch the Open WebUI image, enter the following command.

docker pull ghcr.io/open-webui/open-webui:main

- Enter this command to run the Docker container.

docker run -d -p 9783:8080 -v open-webui:/app/backend/data --name open-webui ghcr.io/open-webui/open-webui:main

- Now launch your browser once the Docker container has started.

- Enter the following host in the URL bar.

http://localhost:9783/

- Click on Create Admin Account.

- Launch Terminal and run Ollama to download the Deepseek model using the following command.

ollama run deepseek-r1:1.5b

- Refresh the Open WebUI page in the browser.

- Select the Deepseek model you previously downloaded to chat with the AI model.
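The container can take a little while to come up after you start it, so the page may not load on the first try. The sketch below polls the address from the steps above until it answers. `wait_for_url` is a made-up helper name, and the attempt count and delay are arbitrary defaults you can change.

```shell
# wait_for_url URL [ATTEMPTS] [DELAY_SECONDS]
# Hypothetical helper: polls URL with curl until it responds or the
# attempts run out. Returns 0 when reachable, 1 otherwise.
wait_for_url() {
  url=$1
  attempts=${2:-30}
  delay=${3:-2}
  i=1
  while [ "$i" -le "$attempts" ]; do
    # -s: quiet, -f: treat HTTP errors as failure, -o: discard the page body
    if curl -sf -o /dev/null --max-time 5 "$url"; then
      echo "ready: $url"
      return 0
    fi
    sleep "$delay"
    i=$((i + 1))
  done
  echo "not reachable after $attempts attempts: $url" >&2
  return 1
}
```

Run `wait_for_url http://localhost:9783/` after starting the container. If it never becomes ready, `docker ps` shows whether the container is actually running.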

Bottom Line

Now you know how to install Deepseek locally on your PC.

Privacy concerns or bothersome network issues will not prevent you from using the AI model and exploring the chatbot’s capabilities.

All you need is enough RAM and storage and a capable GPU on your PC. You can then set up Deepseek on your computer and start sending your queries to the AI model.
